Deep Learning Tutorial

In this deep learning tutorial, we cover what deep learning is, an example of deep learning, architectures, the types of deep learning networks (feed-forward neural networks, recurrent neural networks, convolutional neural networks, restricted Boltzmann machines, and auto-encoders), deep learning applications, limitations, advantages, and disadvantages.

What is Deep Learning?

Deep learning is a branch of machine learning, which is in turn a subset of artificial intelligence. It works because artificial neural networks loosely mimic the functioning of the human brain. The features a model uses are not explicitly programmed; they are learned from data. In essence, it is a class of machine learning that performs feature extraction and transformation using many layers of nonlinear processing units. Each successive layer uses the output of the layer before it as its input. Deep learning models are useful in dealing with the dimensionality problem because they can learn to focus on the relevant features by themselves with only a little programming assistance. Deep learning methods are used in particular when there are many inputs and outputs.

Because deep learning originated in machine learning, a subset of artificial intelligence, and the goal of artificial intelligence is to mimic human behavior, so too is "the goal of deep learning to construct such an algorithm that can mimic the brain." Deep learning is implemented with neural networks, which take their inspiration from biological neurons (basically, brain cells). "Deep learning is a collection of statistical techniques of machine learning for learning feature hierarchies that are actually based on artificial neural networks." So, in essence, deep learning is implemented with deep networks, which are simply neural networks with several hidden layers.

Example of Deep Learning:

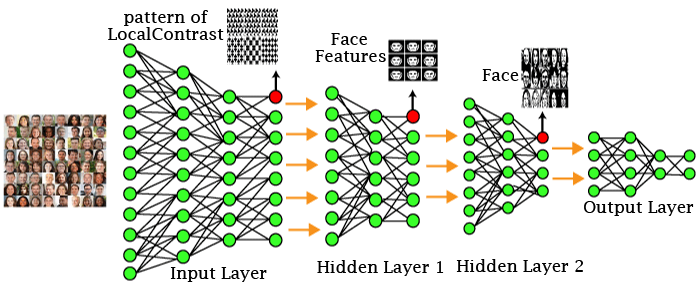

In the example above, we supply the raw image data to the input layer. The input layer then determines patterns of local contrast, distinguishing regions by factors such as color and brightness. The first hidden layer picks out facial features such as the eyes, nose, and lips, and these features are then fitted to the correct face template. As the image shows, the correct face is identified in the second hidden layer before the result is passed to the output layer. Further hidden layers can be added to handle harder problems, such as distinguishing faces by finer characteristics like complexion. We can therefore tackle more complex problems as the number of hidden layers increases.

Architectures:

- Deep Neural Networks

It is a neural network with a certain level of complexity: between the input and output layers there are multiple hidden layers. Deep neural networks excel at modeling and processing non-linear relationships.

- Deep Belief Networks

A deep belief network is a class of deep neural network made up of multiple layers of belief networks.

Steps to perform DBN:

- The Contrastive Divergence algorithm is used to learn a layer of features from the visible units.

- The previously learned features are then treated as visible units, and features of those features are learned.

- Finally, the entire DBN is trained once learning for the last hidden layer is complete.

- Recurrent Neural Networks

It supports both parallel and sequential computation, and it resembles the human brain in being a large feedback network of connected neurons. Because recurrent networks have memory, they can retain the important details of the input they have received, which makes them more accurate.

Types of Deep Learning Networks:

1. Feed Forward Neural Network

A feed-forward neural network is an artificial neural network in which the connections between nodes never form a cycle. All of the perceptrons in this type of network are arranged in layers, with the input layer receiving input and the output layer producing output. The layers in between, which have no connection to the outside world, are called hidden layers. Each perceptron in one layer is connected to every node in the next layer, so the layers are fully connected. There are no connections between nodes within the same layer, whether visible or hidden. The feed-forward network has no feedback loops. The backpropagation algorithm can be used to update the weight values and reduce the prediction error.
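
The layered structure and the backpropagation update described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions rather than a reference implementation: the single hidden layer, the sigmoid activation, the mean-squared-error loss, the learning rate, and the function names (`init_params`, `forward`, `backprop_step`) are all choices made for this example.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def init_params(n_in, n_hidden, n_out, rng):
    # One weight matrix per pair of adjacent layers (fully connected).
    return {
        "W1": rng.normal(0.0, 1.0, size=(n_in, n_hidden)),
        "b1": np.zeros(n_hidden),
        "W2": rng.normal(0.0, 1.0, size=(n_hidden, n_out)),
        "b2": np.zeros(n_out),
    }

def forward(params, X):
    # Data flows strictly input -> hidden -> output; there are no cycles.
    h = sigmoid(X @ params["W1"] + params["b1"])
    y = sigmoid(h @ params["W2"] + params["b2"])
    return h, y

def mse(y_pred, y_true):
    return float(np.mean((y_pred - y_true) ** 2))

def backprop_step(params, X, Y, lr):
    """One gradient-descent update; returns the loss before the update."""
    h, y = forward(params, X)
    # Gradient of the MSE loss with respect to the output pre-activations.
    d_out = 2.0 * (y - Y) / y.size * y * (1.0 - y)
    # Propagate the error back through the hidden layer.
    d_hid = (d_out @ params["W2"].T) * h * (1.0 - h)
    params["W2"] -= lr * (h.T @ d_out)
    params["b2"] -= lr * d_out.sum(axis=0)
    params["W1"] -= lr * (X.T @ d_hid)
    params["b1"] -= lr * d_hid.sum(axis=0)
    return mse(y, Y)

# Usage: fit the XOR truth table (illustrative data, not from the tutorial).
X = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
Y = np.array([[0.0], [1.0], [1.0], [0.0]])
params = init_params(2, 4, 1, np.random.default_rng(0))
for _ in range(2000):
    loss = backprop_step(params, X, Y, lr=0.5)
```

The hidden-layer error `d_hid` is computed from the old `W2` before any weight is changed, which is what makes this a single consistent gradient step.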

Applications:

- Data Compression

- Pattern Recognition

- Computer Vision

- Sonar Target Recognition

- Speech Recognition

- Handwritten Character Recognition

2. Recurrent Neural Network

Recurrent neural networks are a variation of feed-forward networks. Each neuron in the hidden layers receives its input with a specific delay in time. The recurrent neural network mainly draws on information from previous iterations. For instance, to guess the next word in a sentence, one needs to know the words that came before it. In addition to processing the inputs, it shares the same weights across time steps, so the model's size does not grow as the size of the input increases. The drawbacks of the recurrent neural network are that it is slow to compute, it does not take any future input into account for the current state, and it struggles to recall information from far back.
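
The recurrence described above can be sketched as a loop that reuses one set of weights at every time step. This is a minimal sketch of a plain (Elman-style) recurrent layer; the tanh activation, the weight scales, and the names `init_rnn`, `rnn_forward`, and `n_parameters` are assumptions made for illustration.

```python
import numpy as np

def init_rnn(n_in, n_hidden, rng):
    return {
        "W_xh": rng.normal(0.0, 0.1, size=(n_in, n_hidden)),      # input -> hidden
        "W_hh": rng.normal(0.0, 0.1, size=(n_hidden, n_hidden)),  # hidden -> hidden
        "b_h": np.zeros(n_hidden),
    }

def rnn_forward(params, xs):
    """xs has shape (T, n_in); returns the hidden states, shape (T, n_hidden)."""
    h = np.zeros(params["b_h"].shape[0])
    states = []
    for x_t in xs:
        # The new state depends on the current input and the previous state only.
        h = np.tanh(x_t @ params["W_xh"] + h @ params["W_hh"] + params["b_h"])
        states.append(h)
    return np.stack(states)

def n_parameters(params):
    return sum(p.size for p in params.values())

# Usage: the same parameters handle a short and a long sequence.
rng = np.random.default_rng(0)
params = init_rnn(n_in=3, n_hidden=5, rng=rng)
short_states = rnn_forward(params, rng.normal(size=(4, 3)))
long_states = rnn_forward(params, rng.normal(size=(40, 3)))
```

Because the loop only looks backward, the state at step t is unaffected by inputs that arrive later, which matches the limitation noted above.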

Applications:

- Machine Translation

- Robot Control

- Time Series Prediction

- Speech Recognition

- Speech Synthesis

- Time Series Anomaly Detection

- Rhythm Learning

- Music Composition

3. Convolutional Neural Network

Convolutional neural networks are a particular type of neural network used mostly for object recognition, image classification, and image clustering. Deep neural networks of this kind make it possible to build hierarchical image representations. Deep convolutional neural networks are often preferred over other neural networks for these tasks when high accuracy is the goal.
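
The operation that gives these networks their name can be sketched as a small kernel sliding over an image. The tutorial does not spell out the operation, so this is an illustrative sketch only: the function name `conv2d_valid`, the absence of padding and stride, and the edge-detecting kernel are assumptions. Like most deep learning libraries, it computes a cross-correlation (the kernel is not flipped).

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` with no padding and a stride of 1."""
    ih, iw = image.shape
    kh, kw = kernel.shape
    out = np.zeros((ih - kh + 1, iw - kw + 1))
    for r in range(out.shape[0]):
        for c in range(out.shape[1]):
            # Each output value summarises one local patch of the image.
            out[r, c] = np.sum(image[r:r + kh, c:c + kw] * kernel)
    return out

# Usage: a 1x2 kernel that responds where brightness increases left to right.
image = np.zeros((4, 6))
image[:, 3:] = 1.0                  # dark left half, bright right half
edge_kernel = np.array([[-1.0, 1.0]])
edges = conv2d_valid(image, edge_kernel)
```

In a trained network the kernel values are learned rather than hand-written, and the outputs of one convolutional layer feed the next, which is how the hierarchical representation is built up.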

Applications:

- Identify Faces, Street Signs, Tumors.

- Image Recognition.

- Video Analysis.

- NLP.

- Anomaly Detection.

- Drug Discovery.

- Checkers Game.

- Time Series Forecasting.

4. Restricted Boltzmann Machine

Restricted Boltzmann machines (RBMs) are a variant of Boltzmann machines. Here, the neurons in the input layer and the hidden layer are joined by symmetric connections. However, there are no connections within either layer. Ordinary Boltzmann machines, in contrast, do have internal connections within the hidden layer. This restriction is what lets an RBM train more efficiently.
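
Because there are no connections within a layer, all hidden units can be sampled at once given the visible units, and vice versa. The sketch below shows one step of Contrastive Divergence (CD-1), the algorithm mentioned earlier for deep belief networks, applied to a binary RBM. It is a simplified illustration: the binary units, the batch averaging, and the names `sample_hidden`, `sample_visible`, and `cd1_update` are assumptions.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def sample_hidden(v, W, b_hid, rng):
    # Hidden units are conditionally independent given the visible layer.
    p = sigmoid(v @ W + b_hid)
    return p, (rng.random(p.shape) < p).astype(float)

def sample_visible(h, W, b_vis, rng):
    # The same weight matrix is used in both directions (symmetric connections).
    p = sigmoid(h @ W.T + b_vis)
    return p, (rng.random(p.shape) < p).astype(float)

def cd1_update(W, b_vis, b_hid, v0, lr, rng):
    """One CD-1 step on a batch v0 of shape (n_samples, n_visible)."""
    ph0, h0 = sample_hidden(v0, W, b_hid, rng)    # positive phase (data)
    _, v1 = sample_visible(h0, W, b_vis, rng)     # one-step reconstruction
    ph1, _ = sample_hidden(v1, W, b_hid, rng)     # negative phase
    n = v0.shape[0]
    W_new = W + lr * (v0.T @ ph0 - v1.T @ ph1) / n
    b_vis_new = b_vis + lr * (v0 - v1).mean(axis=0)
    b_hid_new = b_hid + lr * (ph0 - ph1).mean(axis=0)
    return W_new, b_vis_new, b_hid_new

# Usage: 6 visible units, 3 hidden units, random binary data (illustrative).
rng = np.random.default_rng(0)
W = rng.normal(0.0, 0.01, size=(6, 3))
b_vis, b_hid = np.zeros(6), np.zeros(3)
data = (rng.random((20, 6)) < 0.5).astype(float)
for _ in range(10):
    W, b_vis, b_hid = cd1_update(W, b_vis, b_hid, data, lr=0.1, rng=rng)
```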

Applications:

- Filtering.

- Feature Learning.

- Classification.

- Risk Detection.

- Business and Economic analysis.

5. Auto-encoders

An auto-encoder neural network is another kind of unsupervised machine learning algorithm. Here, the number of hidden cells is smaller than the number of input cells, while the number of input cells equals the number of output cells. An auto-encoder network is trained to produce an output that resembles the input it was fed, which forces it to find common patterns and generalize the data. Auto-encoders are mainly used to obtain a smaller representation of the input, from which the original data can then be reconstructed. The algorithm is relatively simple because it only requires the output to match the input.

- Encoder: reduces the dimensionality of the input data.

- Decoder: reconstructs the data from its compressed form.
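
The encoder and decoder roles described above can be sketched with a tiny linear auto-encoder trained by gradient descent. This is a minimal sketch under stated assumptions: the linear (activation-free) layers, the mean-squared reconstruction loss, the learning rate, the synthetic data, and the names `init_autoencoder`, `encode`, `decode`, and `train_step` are all chosen for illustration.

```python
import numpy as np

def init_autoencoder(n_in, n_hidden, rng):
    # Fewer hidden cells than input cells; output size equals input size.
    return {
        "W_enc": rng.normal(0.0, 0.1, size=(n_in, n_hidden)),
        "W_dec": rng.normal(0.0, 0.1, size=(n_hidden, n_in)),
    }

def encode(params, X):
    return X @ params["W_enc"]        # compress to the smaller representation

def decode(params, code):
    return code @ params["W_dec"]     # reconstruct the original dimensions

def reconstruction_loss(params, X):
    return float(np.mean((decode(params, encode(params, X)) - X) ** 2))

def train_step(params, X, lr):
    code = encode(params, X)
    err = decode(params, code) - X    # the training target is the input itself
    scale = 2.0 / X.size
    grad_dec = scale * (code.T @ err)
    grad_enc = scale * (X.T @ (err @ params["W_dec"].T))
    params["W_dec"] -= lr * grad_dec
    params["W_enc"] -= lr * grad_enc

# Usage: 6-dimensional data that really lies on a 2-dimensional subspace.
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 2)) @ rng.normal(size=(2, 6))
params = init_autoencoder(n_in=6, n_hidden=2, rng=rng)
for _ in range(300):
    train_step(params, X, lr=0.02)
compressed = encode(params, X)        # shape (50, 2)
```

No labels appear anywhere in the training loop, which is why the method counts as unsupervised.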

Applications:

- Classification.

- Clustering.

- Feature Compression.

Deep learning applications

- Self-Driving Cars: Self-driving cars process a vast quantity of data to take in the visuals of their surroundings. They then decide whether to turn left, turn right, or stop, choosing the actions intended to further reduce the number of accidents that occur each year.

- Voice Controlled Assistance: Siri is the first thing that comes to mind when we talk about voice-controlled assistance. You can tell Siri what you want, and it will search for it and present the result.

- Automatic Image Caption Generation: The algorithm generates a caption for whatever image you supply. For example, given an image of a blue eye, it produces a caption describing it, shown beneath the image.

- Automatic Machine Translation: With deep learning, text can be translated automatically from one language into another.

Limitations

- It learns only from the observations it is given.

- It is prone to bias-related problems.

Advantages

- The need for feature engineering is reduced.

- It cuts out unnecessary costs.

- It readily identifies defects that are difficult to detect.

- It delivers best-in-class performance on many problems.

Disadvantages

- It requires a large amount of data.

- Training is very expensive.

- It lacks a solid theoretical foundation.