Multiple Linear Regression in ML

Table of Contents:

- What is Multiple Linear Regression?

- How Does Multiple Linear Regression Work?

- Advantages

- Disadvantages

- Step-by-Step Implementation in Python

- Conclusion

What is Multiple Linear Regression?

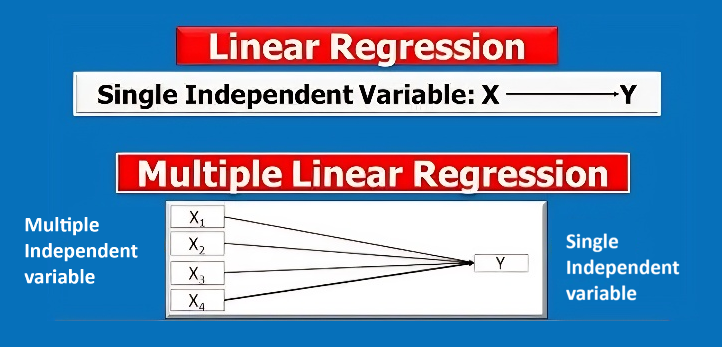

Multiple Linear Regression (MLR) is a statistical technique that models the linear relationship between a single dependent variable (y) and two or more independent variables (x₁, x₂,... xₙ). Unlike simple linear regression, which focuses on a single factor, MLR allows us to analyze the influence of multiple factors on the dependent variable.

Mathematically, it's expressed as:

y = β₀ + β₁x₁ + β₂x₂ + ... + βₙxₙ + ε

y represents the dependent variable, x₁, x₂,..., xₙ are independent variables, β₀ is the intercept, β₁, β₂,..., βₙ are the coefficients (weights) that show how each independent variable impacts y, and ε is the error term (which accounts for the variance in y not explained by the independent variables).

How Does Multiple Linear Regression Work?

In multiple linear regression, the algorithm determines the optimal values for the coefficients by minimizing the difference between the actual and predicted values of the dependent variable. This process is called Ordinary Least Squares (OLS), where the goal is to minimize the sum of squared residuals (the differences between observed and predicted values).

Advantages of Multiple Linear Regression

- Multifactorial Analysis: Allows you to understand the combined effect of multiple independent variables on the dependent variable.

- Interpretability: Each coefficient in the regression model provides valuable insights into the relationship between the independent and dependent variables.

- Predictive Power: Including multiple variables typically leads to more accurate predictions.

Disadvantages of Multiple Linear Regression

- Assumption of Linearity: The model assumes a linear relationship between the independent and dependent variables, which may not always be the case in real-world data.

- Multicollinearity: High correlation between independent variables can distort the results, making it hard to interpret the model correctly.

- Overfitting: Including too many variables can cause the model to overfit, performing well on the training data but poorly on new, unseen data.

Difference Between Simple Linear Regression (SLR) and Multiple Linear Regression (MLR):

1. Number of Independent Variables

Linear Regression (LR) involves only one independent variable, while Multiple Linear Regression (MLR) involves two or more independent variables. This distinction defines the complexity and utility of each model in real-world applications.

- LR: Models the relationship between one dependent and one independent variable.

Example: Predicting salary based on years of experience. - MLR: Models the relationship between one dependent variable and two or more independent variables.

Example: Predicting a company's profit based on R&D spend, administration expenses, and marketing spend.

2. Complexity

Linear Regression is simpler, easier to interpret, and typically requires fewer data points. MLR is more complex as it requires handling multiple independent variables and can capture relationships with more factors, leading to potentially more accurate predictions.

- LR: Simple, easy to visualize (straight line) and interpret.

- MLR: Complex, harder to visualize (requires multidimensional space) but allows modeling multiple factors.

3. Interpretability

Linear Regression is easier to interpret because it focuses on the relationship between one independent variable and the dependent variable. Multiple Linear Regression requires interpretation of multiple coefficients, which can be more challenging.

- LR: The slope of the line represents the change in the dependent variable for a one-unit change in the independent variable.

- MLR: Each coefficient represents the effect of a one-unit change in the respective independent variable while holding other variables constant.

4. Overfitting

Overfitting is more common in MLR because it includes more variables, which can fit the training data very well but perform poorly on unseen data. Regularization techniques can help prevent overfitting in MLR.

- LR: Less prone to overfitting due to fewer variables.

- MLR: More prone to overfitting, especially if many independent variables are included relative to the amount of data.

5. Multicollinearity

Multicollinearity, which occurs when independent variables are highly correlated with each other, is a concern in MLR but not in LR since LR only deals with one independent variable.

- LR: No multicollinearity because there's only one independent variable.

- MLR: Multicollinearity can distort model coefficients, making the model less reliable. It’s essential to detect and address multicollinearity in MLR.

6. Equation Form

The mathematical representation of LR and MLR differs in terms of the number of independent variables. While LR uses a single variable, MLR involves multiple variables to explain the outcome.

- LR Equation: y = β₀ + β₁x + ε, where x is the independent variable.

- MLR Equation: y = β₀ + β₁x₁ + β₂x₂ + ... + βₙxₙ + ε, where x₁, x₂, \dots, xₙ are the independent variables.

7. Visualization

LR can be easily visualized on a 2D plot as a straight line, whereas MLR involves multidimensional space, making it harder to visualize directly.

- LR: Visualized as a straight line on a 2D graph.

- MLR: Visualized in multidimensional space, which is harder to represent graphically.

8. Application

LR is used when the outcome depends on one factor, while MLR is applied when the outcome is influenced by multiple factors.

- LR: Suitable for understanding simple relationships between two variables (e.g., salary based on years of experience).

- MLR: Ideal for capturing more complex relationships involving multiple predictors (e.g., house price based on location, size, and amenities).

Summary Table:

Here’s a comparison of LR vs MLR in a table format:

| Aspect | Linear Regression (LR) | Multiple Linear Regression (MLR) |

|---|---|---|

| Number of Variables | One independent variable | Two or more independent variables |

| Complexity | Simpler, easier to interpret | More complex, harder to interpret |

| Overfitting | Less prone to overfitting | More prone to overfitting |

| Multicollinearity | No concern | Can be a concern |

| Equation Form | y = β₀ + β₁x + ε | y = β₀ + β₁x₁ + β₂x₂ + ... + βₙxₙ + ε |

| Visualization | 2D graph, straight line | Multidimensional space, harder to visualize |

| Application | Simple relationships | Complex relationships with multiple factors |

Step-by-Step Implementation of Multiple Linear Regression in Python

Let's implement Multiple Linear Regression in Python using the 50 Startups Dataset. This dataset predicts a startup's profit based on variables like R&D Spend, Administration, Marketing Spend, and State.

Step 1: Importing Libraries

import numpy as np

import pandas as pd

from sklearn.model_selection import train_test_split

from sklearn.linear_model import LinearRegression

from sklearn.metrics import mean_squared_error, r2_score

Explanation:

We import the essential Python libraries:

- NumPy is used for numerical operations and handling arrays.

- Pandas is a powerful library for data manipulation and analysis, particularly for loading and processing datasets.

- train_test_split from scikit-learn is used to split the dataset into training and test sets.

- LinearRegression from scikit-learn provides the methods for fitting the regression model.

- mean_squared_error and r2_score are metrics for evaluating the model's performance.

Step 2: Loading the Dataset

dataset = pd.read_csv('datasets/50_Startups.csv') # Replace with your dataset path

X = dataset.iloc[:, :-1].values # Independent variables (everything except the last column)

y = dataset.iloc[:, -1].values # Dependent variable (last column - profit)

Explanation:

- We use pd.read_csv() to load the dataset from a CSV file.

- X contains the independent variables (all columns except the last).

- y contains the dependent variable (the last column, which is the profit).

Step 3: Handling Categorical Data

from sklearn.compose import ColumnTransformer

from sklearn.preprocessing import OneHotEncoder

ct = ColumnTransformer([("State", OneHotEncoder(), [3])], remainder='passthrough')

X = ct.fit_transform(X)

X = X[:, 1:] # Avoid dummy variable trap

Explanation:

- The State column is a categorical variable, and machine learning models require numerical input.

- We use OneHotEncoder to convert the State column into numerical values (one-hot encoding).

- ColumnTransformer applies the transformation only to the State column.

- We remove one dummy variable to avoid the dummy variable trap, which can lead to multicollinearity.

Step 4: Splitting the Dataset

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

Explanation:

- We split the dataset into training and test sets using train_test_split.

- test_size=0.2 means 20% of the data is used for testing, and 80% for training.

- random_state=0 ensures reproducibility of the split.

Step 5: Fitting the Model

regressor = LinearRegression()

regressor.fit(X_train, y_train)

Explanation:

- We create an instance of the LinearRegression class, called regressor.

- regressor.fit(X_train, y_train) trains the model by fitting the independent and dependent variables in the training data.

Step 6: Predicting Results

y_pred = regressor.predict(X_test)

Explanation:

- We use regressor.predict(X_test) to predict the dependent variable (profit) for the test data.

- The predictions are stored in y_pred, which we can compare to actual test values for evaluation.

Step 7: Evaluating Model Performance

mse = mean_squared_error(y_test, y_pred)

r2 = r2_score(y_test, y_pred)

print(f'Mean Squared Error: {mse}')

print(f'R-squared: {r2}')

Explanation:

- mean_squared_error calculates the average squared differences between actual and predicted values, providing an estimate of how well the model performs (lower is better).

- r2_score measures the proportion of variance in the dependent variable that can be predicted by the independent variables (values closer to 1 are better).

Conclusion:

Multiple Linear Regression is a powerful method for analyzing the relationship between multiple variables and a target variable. With proper preprocessing, splitting, and evaluation, you can use this technique to build effective predictive models. Understanding the metrics like Mean Squared Error and R-squared helps evaluate the model's performance and reliability.